How AI Is Reshaping Product Management

The rapid emergence, innovation, and adoption of AI are changing how software product managers do their jobs, as well as the products they are responsible for delivering. These changes are unfolding across every facet of product development, and their impact on the product management discipline will be profound.

This moment warrants reflection: what is being disrupted, what remains durable, what is still unknown, and what assumptions we are implicitly making as we build AI‑enabled products. While the pace of change is extraordinary, many of these shifts are already visible in day‑to‑day product practice. Establishing a clear point of view on these changes helps anchor both near‑term execution and longer‑term strategy.

Three Ways AI Is Changing Product Work

1. Using AI to Build Products Faster

One of the most immediate impacts of AI is its ability to accelerate early‑stage product work. By treating use cases and requirements as inputs — the “fuel” — and GenAI tools as the engine, product managers can rapidly generate and refine PRDs, designs, and prototype experiences.

This capability gives development teams clearer direction while dramatically shortening the cycle from idea to artifact. PMs can express ideas more concretely, explore alternatives earlier, and help teams converge faster on viable solutions. The result is not less product thinking, but more leverage applied to it.

2. Building AI Into the Product

When AI becomes part of the product itself, the PM’s role shifts toward deliberately designing how user intent is captured and how the system responds. A core question emerges: what is the best way for a user to engage with this capability?

Possible interaction models include chat‑first experiences, traditional web‑based interfaces, file uploads, prompts initiated through Teams or email, or requests originating from other agents or systems. The choice of interaction model directly shapes how users frame their needs — and how the system interprets them.

Equally important is how the product responds. Should the output be text, visuals, recommendations, actions, or a sequence of agentic tasks? When generation is involved, PMs must be intentional about defining expectations and shaping outcomes so that responses align with user intent and business goals. AI expands the design space, but that flexibility demands precision.

3. Evolving Capabilities After Launch

Evolving AI‑based products after launch introduces new challenges, particularly in understanding user behavior when there is no explicit GUI to observe. Traditional signals such as clicks, navigation paths, and visible friction may no longer exist. Instead, PMs must infer intent and satisfaction from prompts, responses, and behavioral patterns.

This raises difficult questions: how do we know when users are getting what they need? How do we detect confusion or failure when interactions are conversational rather than visual? Measuring success becomes less about observing actions and more about interpreting outcomes.

For products migrating from an existing web app or workflow experience, there is an opportunity to compare “from” and “to” states. A critical metric is the time it takes to complete existing jobs to be done today, compared with the time agents or automated workflows take to complete those same jobs. For AI‑native products, which have no prior state to compare against, new evaluation frameworks will be required.
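As a rough illustration of the “from” and “to” comparison, the Python sketch below computes, for each job to be done, the fractional reduction in median completion time between the existing workflow and the agent. The `JobTiming` class, the `time_savings` and `report` functions, and the job names and durations in the usage example are hypothetical; the sketch assumes completion times have already been captured in minutes for the same jobs in both states.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List

@dataclass
class JobTiming:
    """Observed completion times, in minutes, for one job to be done (hypothetical schema)."""
    job: str
    baseline_minutes: List[float]  # "from" state: existing web app or workflow
    agent_minutes: List[float]     # "to" state: agent or automated workflow

def time_savings(timing: JobTiming) -> float:
    """Fractional reduction in median completion time (0.25 means 25% faster).

    A negative value means the agent is currently slower than the baseline.
    """
    before = median(timing.baseline_minutes)
    after = median(timing.agent_minutes)
    if before <= 0:
        raise ValueError("baseline median must be positive")
    return (before - after) / before

def report(timings: List[JobTiming]) -> Dict[str, float]:
    """Map each job to its time savings, rounded to three decimals."""
    return {t.job: round(time_savings(t), 3) for t in timings}

if __name__ == "__main__":
    # Illustrative inputs only, not measured data.
    jobs = [
        JobTiming("variance report", [40, 50, 45], [10, 12, 8]),
        JobTiming("onboarding checklist", [30, 30], [36, 36]),
    ]
    print(report(jobs))  # {'variance report': 0.778, 'onboarding checklist': -0.2}
```

Using the median rather than the mean keeps a few unusually slow sessions from dominating the comparison, and a negative value flags a job where the agent is currently slower than the old experience.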

Why Use Cases Matter More Than Ever

Amid all this change, one constant becomes even more important: the use case. In fact, use cases matter more now than they did in traditional software development.

In classic product workflows, iterative UI reviews and sprint demos help keep vision and implementation aligned. AI‑driven products, particularly those with emerging UX/AX patterns, lack many of those stabilizing forces. Assumptions — always the enemy of requirements clarity — become even more dangerous when systems are generative and non‑deterministic.

Unlike human team members, AI cannot be “trained” over time through tacit knowledge and shared context. Product managers must therefore be far more explicit in shaping desired outcomes. Clear, crisp use cases anchor expectations and reduce ambiguity across product and engineering.

Equally important, PMs cannot stop at platform‑level use cases. To deliver real value, they must go one level deeper, defining solution‑specific prompts that capture the actual questions users need answered. These prompts often become the initial expression of key jobs to be done and form the basis for validating early releases. Striking the right balance between platform abstraction and solution specificity is essential.

Data: The New Center of Gravity for PMs

Once user questions are clearly understood, attention naturally turns to the data required to answer them. In AI‑enabled products, the familiar axiom “garbage in, garbage out” is more relevant than ever.

Whether data is sourced from the open internet or from proprietary enterprise assets, the user’s job to be done is directly dependent on the quality, structure, and accessibility of that data. In many cases, this requires additional layers of metadata and semantic clarity so that both humans and agents can interpret information consistently.

File systems, data warehouses, APIs, MCP (Model Context Protocol) servers, and other technical connectors remain foundational. The systems that organize, prepare, and expose data are a critical, and often underestimated, part of the solution stack. Even if PMs do not own the data, once it powers their product, they own the problem, including usage rights, commercial considerations, refresh cycles, and ongoing upkeep.

From Capabilities to Outcomes: Designing AI‑Based Solutions

As PMs deepen their understanding of user jobs and data requirements, their work increasingly overlaps with solution design. This changes how final deliverables are conceived.

Historically, pixel‑perfect wireframes often represented the source of truth for a release. In AI‑driven systems, an agent may instead draw upon a collection of underlying capabilities — tools, skills, or MCPs — to perform a job. These capabilities become the raw ingredients that, when assembled correctly, produce the desired outcome.

Importantly, those outcomes can be tailored. Solutions may be specialized for particular teams, clients, or workflows, effectively customizing the “recipe” while reusing the same core ingredients. Scalability is no longer about forcing uniform workflows, but about enabling variation atop a shared foundation.
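To make the ingredient-and-recipe analogy concrete, here is a minimal Python sketch, assuming each capability can be modeled as a function that reads and extends a shared context. The capability names (`fetch_sales`, `filter_region`, `summarize`), the hard-coded rows, and the `RECIPES` table are hypothetical stand-ins for real tools, skills, or MCP servers; the point is only that two tailored recipes reuse the same core ingredients.

```python
from typing import Callable, Dict, List, Optional

# A capability ("ingredient") takes the current context and returns an updated copy.
Capability = Callable[[dict], dict]

def fetch_sales(ctx: dict) -> dict:
    # Hypothetical data connector; a real one might query a warehouse, API, or MCP server.
    return {**ctx, "rows": [("east", 120), ("west", 80)]}

def filter_region(region: str) -> Capability:
    # Parameterized capability: the same ingredient, configured per team or client.
    def step(ctx: dict) -> dict:
        return {**ctx, "rows": [row for row in ctx["rows"] if row[0] == region]}
    return step

def summarize(ctx: dict) -> dict:
    # Produces the outcome the user actually sees.
    total = sum(amount for _, amount in ctx["rows"])
    return {**ctx, "summary": f"Total sales: {total}"}

# Recipes: ordered lists of shared capabilities, tailored per audience.
RECIPES: Dict[str, List[Capability]] = {
    "company_wide": [fetch_sales, summarize],
    "east_team": [fetch_sales, filter_region("east"), summarize],
}

def run_recipe(name: str, ctx: Optional[dict] = None) -> dict:
    """Apply each capability in the named recipe, in order."""
    result = dict(ctx or {})
    for step in RECIPES[name]:
        result = step(result)
    return result

if __name__ == "__main__":
    print(run_recipe("company_wide")["summary"])  # Total sales: 200
    print(run_recipe("east_team")["summary"])     # Total sales: 120
```

A fixed list keeps the sketch simple; in an agentic system the model may select and order capabilities at run time, which makes a clear contract for each capability more important.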

QA and Human‑in‑the‑Loop Validation

One of the most challenging aspects of AI product deployment is quality assurance. Meaningful QA often requires deep domain expertise, contextual understanding, and tacit knowledge — qualities that are difficult to automate.

Subject matter experts play a critical role in validating whether outputs align with expected results. They may begin with prompts representing key jobs to be done, but assessing correctness, relevance, and nuance must be led by individuals directly connected to the desired end state.

This phase is inherently iterative and human‑intensive. It also exposes a real trade‑off: the ability to ship quickly versus the effort required to fine‑tune outcomes. Over time, this iteration improves both the product and the underlying data assets, but it requires sustained commitment from the business.
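One lightweight way to organize this SME review is a golden set of prompts, each tied to the job to be done it represents, with SMEs recording pass or fail judgments on the outputs. The Python sketch below is a hypothetical structure, not a prescribed process: `EvalCase`, `pass_rates`, `release_ready`, the example prompts, and the 0.9 default threshold are all illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class EvalCase:
    job: str      # job to be done this prompt represents
    prompt: str   # solution-specific question a real user would ask
    verdicts: List[bool] = field(default_factory=list)  # SME pass/fail judgments

    def record(self, passed: bool) -> None:
        """Store one SME judgment of the system's output for this prompt."""
        self.verdicts.append(passed)

def pass_rates(cases: List[EvalCase]) -> Dict[str, float]:
    """Share of passing SME verdicts per job; jobs with no verdicts are omitted."""
    counts: Dict[str, List[int]] = {}
    for case in cases:
        tally = counts.setdefault(case.job, [0, 0])  # [passed, reviewed]
        tally[0] += sum(case.verdicts)
        tally[1] += len(case.verdicts)
    return {job: passed / reviewed for job, (passed, reviewed) in counts.items() if reviewed}

def release_ready(cases: List[EvalCase], threshold: float = 0.9) -> bool:
    """True only if every job has been reviewed and meets the threshold."""
    rates = pass_rates(cases)
    all_jobs = {case.job for case in cases}
    return set(rates) == all_jobs and all(rate >= threshold for rate in rates.values())

if __name__ == "__main__":
    forecast = EvalCase("forecasting", "What will Q4 revenue be for the EMEA region?")
    churn = EvalCase("churn analysis", "Which accounts are most likely to churn next quarter?")
    for verdict in (True, True, True, False):
        forecast.record(verdict)
    churn.record(True)
    print(pass_rates([forecast, churn]))     # {'forecasting': 0.75, 'churn analysis': 1.0}
    print(release_ready([forecast, churn]))  # False
```

Requiring every job to have at least one verdict keeps an unreviewed job from silently passing the gate; the threshold itself is a business decision that reflects the ship-fast versus fine-tune trade-off described above.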

Post‑Launch Pattern Recognition & Scaling

After launch, new patterns begin to emerge. As usage grows, PMs can identify recurring questions, common workflows, and opportunities to abstract bespoke solutions into more scalable capabilities.

In many AI‑driven products, this abstraction happens after delivery rather than before MVP. Rapid prototyping surfaces real‑world signals that are difficult to predict through upfront architectural planning alone. As more users onboard, feedback becomes clearer and easier to separate from noise.

PMs will also uncover questions that the system cannot yet answer. These gaps often point directly toward the next wave of capability development, guiding both platform evolution and data investment.
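A simple first pass at spotting both recurring questions and unanswered ones can be run over interaction logs. The Python sketch below assumes a hypothetical log of `(question, was_answered)` pairs, where `was_answered` stands in for whatever signal the product uses to mark a fallback or failed response; the `normalize`, `frequent`, and `recurring_questions` helpers are illustrative. Exact-match counting after light normalization is a deliberate simplification, and a production system would more likely group questions by semantic similarity.

```python
import re
from collections import Counter
from typing import List, Tuple

def normalize(question: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace so near-duplicates match."""
    text = re.sub(r"[^\w\s]", "", question.lower())
    return " ".join(text.split())

def frequent(counter: Counter, min_count: int) -> List[Tuple[str, int]]:
    """Questions asked at least min_count times, most common first."""
    return [(q, n) for q, n in counter.most_common() if n >= min_count]

def recurring_questions(
    log: List[Tuple[str, bool]], min_count: int = 2
) -> Tuple[List[Tuple[str, int]], List[Tuple[str, int]]]:
    """Return (recurring answered questions, recurring unanswered questions)."""
    answered: Counter = Counter()
    unanswered: Counter = Counter()
    for question, was_answered in log:
        target = answered if was_answered else unanswered
        target[normalize(question)] += 1
    return frequent(answered, min_count), frequent(unanswered, min_count)

if __name__ == "__main__":
    log = [
        ("What were Q3 sales?", True),
        ("what were q3 sales", True),
        ("Show churn by region", False),
        ("show churn by region?", False),
        ("Who is our top client?", True),
    ]
    common, gaps = recurring_questions(log)
    print(common)  # [('what were q3 sales', 2)]
    print(gaps)    # [('show churn by region', 2)]
```

Recurring answered questions are candidates for abstraction into reusable capabilities, while recurring gaps point toward the next wave of capability or data investment.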

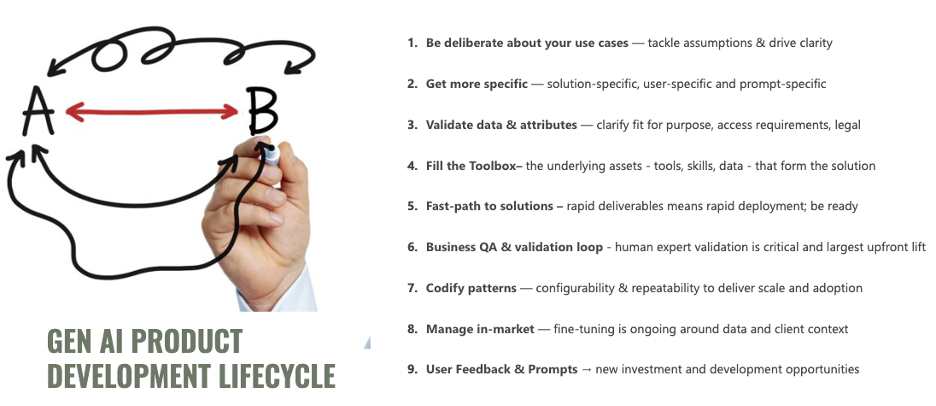

The GenAI Product Development Lifecycle

The Big Unknowns in 2026

Despite rapid progress, several fundamental questions remain unresolved as AI moves deeper into enterprise software.

How much agency will end users have in assembling their own solutions? Will they construct workflows and agents themselves, or will organizations constrain customization to preserve governance and consistency?

How will agentic systems change established delivery models? Recurring artifacts such as performance reports may persist — or they may be replaced by agents that act immediately on insights, coordinating across teams without human intervention.

Finally, how will agents fit into organizational frameworks with defined roles, responsibilities, and permissions? Authentication, data privileges, and cross‑agent interaction likely belong at the platform layer, yet those controls must operate seamlessly across multiple agent planes and contexts.